Faceted navigation best practices

Consumers want to find exactly what they’re looking for quickly and easily when they land on any website. It could be a t-shirt in a specific size and colour, train ticket prices for a certain day and time, or a particular car repair service at a garage.

Faceted navigation – also known as faceted search or guided navigation – enables this by using product metadata to refine search queries and category listings. Implementing it is a great way to enhance user experience, which can help improve conversions and search performance.

Most ecommerce and enterprise sites use faceted navigation to help users reach the products they want faster. It’s great for users, but it can lead to SEO problems by creating tens of thousands of similar page versions.

If faceted navigation isn’t implemented correctly or doesn’t adhere to best practices, your site could waste significant crawl budget, create duplicate content, and cause index bloat. Understanding and following best practices for faceted navigation avoids these risks and boosts performance in both SEO and user experience.

What is faceted navigation?

Faceted navigation is a filtering system that enables website users to refine their search using specific attributes. The technique applies various filters to a large dataset, customising the results and narrowing them by attributes. Examples include price, colour, size, or brand of a clothing item.

Facets are intelligent layers that extend a site’s primary categories. They create a custom navigation or search option based on the user’s specific requirements, enhancing the user experience. You’ll typically find faceted navigation within sidebars on ecommerce sites, where users can filter product listings to their specifications.

How does faceted navigation work?

Faceted navigation narrows the items on a site’s listing page as users select multiple, specific attributes, normally through filters or checkboxes. The chosen filters dynamically change what the page displays, typically by appending parameters to the URL.

Each selection is assigned a unique value, and these values are passed via URL parameters to modify the page’s content. The site uses the backend data assigned to each product or URL to filter results in real time based on the selected filters.
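To make the filtering step concrete, here is a minimal Python sketch. The product records and the `apply_facets` helper are hypothetical, and the sketch assumes one common convention: values ticked within the same facet are combined with OR, while different facets are combined with AND. Real platforms run this logic against a database or search index rather than an in-memory list.

```python
# Hypothetical product records: each carries the attribute metadata that facets filter on.
PRODUCTS = [
    {"name": "Trail runner", "colour": "black", "size": 11, "brand": "Acme"},
    {"name": "Road racer", "colour": "white", "size": 11, "brand": "Acme"},
    {"name": "Court classic", "colour": "black", "size": 9, "brand": "Zenith"},
    {"name": "Daily trainer", "colour": "black", "size": 10, "brand": "Zenith"},
]


def apply_facets(products: list[dict], selections: dict[str, set]) -> list[dict]:
    """Return the products that match every selected facet.

    `selections` maps a facet name to the set of values the user ticked.
    Values within one facet are OR-ed (size 10 OR 11); different facets
    are AND-ed (colour AND size). This is a convention, not a rule.
    """
    return [
        product
        for product in products
        if all(product.get(facet) in values for facet, values in selections.items())
    ]


matches = apply_facets(PRODUCTS, {"colour": {"black"}, "size": {10, 11}})
print([p["name"] for p in matches])  # ['Trail runner', 'Daily trainer']
```

An empty selection returns the full listing, which corresponds to the unfiltered category page.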

This dynamic filtering creates custom views for the user, rather than a rigid hierarchy where you’d have tens of thousands of static URLs for every small product variant. However, it still generates many similar URLs. For example:

- Rigid URL: www.example.com/shoes/trainers/running/black/size-11

- Faceted navigation: www.example.com/shoes/?colour=black&size=11
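The sketch below shows how a set of filter selections might be turned into a faceted URL like the one above. `facet_url` is a hypothetical helper, and joining multi-select values with commas is an assumption; some platforms repeat the parameter instead (for example, `size=10&size=11`).

```python
from urllib.parse import urlencode, urlsplit, urlunsplit


def facet_url(base_url: str, selections: dict[str, list[str]]) -> str:
    """Append facet selections to a category URL as query parameters.

    Any query string already on `base_url` is replaced. Facets with no
    selected values are left out, so an empty selection returns the
    plain category URL.
    """
    scheme, netloc, path, _old_query, fragment = urlsplit(base_url)
    params = {facet: ",".join(values) for facet, values in selections.items() if values}
    # safe="," keeps multi-select separators readable instead of encoding them as %2C.
    query = urlencode(params, safe=",")
    return urlunsplit((scheme, netloc, path, query, fragment))


print(facet_url("https://www.example.com/shoes/", {"colour": ["black"], "size": ["11"]}))
# https://www.example.com/shoes/?colour=black&size=11
```

Every extra facet or value produces another distinct URL, which is where the SEO problems covered below come from.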

SEO faceted navigation benefits and problems

Benefits of faceted navigation

The less work consumers need to do to find the precise items they want, the more likely they are to make a purchase, generate a lead, and return to your site. Faceted navigation reduces friction and customer frustration, creating a smooth user experience (UX) that’s highly beneficial for ecommerce SEO.

It streamlines the customer journey from awareness to comparison and transaction. By making that journey quick and easy, without the need to click through lots of rigid URLs, it can also boost conversions and customer loyalty.

There are also several SEO benefits of faceted search, including:

- Ranking for long-tail keywords – Faceted pages can align with long-tail search queries with lower keyword difficulty but higher intent. This can improve traffic, too.

- Expanding your organic footprint – Strategically indexing high-value faceted pages can increase your visibility and potential ranking opportunities for hundreds or thousands of keyword variations, rather than relying on a single category page.

- Improving SEO metrics – Longer time on site, lower bounce rates, and higher engagement can signal to search engines that the content is high quality and helpful, which may support rankings.

Faceted navigation SEO problems

Faceted navigation can generate tens of thousands of similar dynamic URLs, which search engines may treat as duplicate pages if left to decide for themselves. This happens when all facets and filters are crawlable and indexable, and it wastes crawl budget and dilutes internal link equity.

As the number of parameters increases, so does the number of near-duplicate pages, which can limit how visible your important pages are in search. Cumulatively, it also puts your site at risk of keyword cannibalisation, where your pages compete with each other in SERPs.
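A quick worked calculation shows how fast this grows. The Python sketch below counts the distinct filter states for a single listing page; the facet sizes are hypothetical, and it ignores parameter-order variants, which would multiply the totals further on platforms that don’t normalise them.

```python
from math import prod


def count_facet_urls(values_per_facet: dict[str, int], multi_select: bool = False) -> int:
    """Count distinct filter states for one listing page, including the unfiltered page.

    Single-select: each facet is either unset or set to one of its n values (n + 1 states).
    Multi-select: each value can be independently ticked or not (2 ** n states).
    """
    if multi_select:
        return prod(2 ** n for n in values_per_facet.values())
    return prod(n + 1 for n in values_per_facet.values())


facets = {"colour": 10, "size": 8, "brand": 12}  # hypothetical facet sizes
print(count_facet_urls(facets))                     # 1287
print(count_facet_urls(facets, multi_select=True))  # 1073741824
```

With just three facets, single-select filtering gives 11 × 9 × 13 = 1,287 URLs per category, while allowing multiple values per facet pushes that past a billion possible combinations.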

As a result, Google could end up prioritising and ranking a page you don’t want over the one you do. Avoiding faceted navigation SEO problems is necessary for any ecommerce site to perform well in both traditional search and AI search.

Faceted search best practices

The benefits of faceted search for user experience mean implementing it is important, but the risks to your SEO campaigns remain. Knowing the best way to do faceted navigation helps introduce the technique to your site without harming your visibility and SEO performance.

Faceted search best practices help mitigate the risks and achieve both customer and marketing success.

Implement canonical tags

Canonicalisation is a great way to direct search engines to the preferred version of similar pages – the one you want users to see in search results. Adding a canonical tag that points back to the category page on facet URLs helps consolidate link equity to preferred pages and may improve their rankings.

This stops the filtered URL from competing with the category or product page and reduces the risk of duplicate content. That said, careful analysis of your filters can identify valuable combinations, as some may benefit from an indexable URL with a self-referencing canonical.
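Here is a minimal Python sketch of that decision. The allowlist of valuable combinations is hypothetical; in practice it would come from keyword research. Facet URLs on the allowlist get a self-referencing canonical (normalised so parameter order doesn’t matter), and every other facet URL points back to its category page.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

# Hypothetical allowlist of facet combinations judged valuable enough to index.
# Stored as frozensets of (facet, value) pairs so parameter order doesn't matter.
INDEXABLE_COMBINATIONS = {
    frozenset({("colour", "black")}),
    frozenset({("colour", "black"), ("size", "11")}),
}


def canonical_tag(url: str) -> str:
    """Return the canonical link element for a category or facet URL."""
    parts = urlsplit(url)
    facets = frozenset(parse_qsl(parts.query))
    category_url = f"{parts.scheme}://{parts.netloc}{parts.path}"
    if facets and facets in INDEXABLE_COMBINATIONS:
        # Self-referencing canonical, with parameters sorted into one consistent order.
        target = f"{category_url}?{urlencode(sorted(facets))}"
    else:
        # Unfiltered page, or a facet combination not worth indexing: point to the category.
        target = category_url
    return f'<link rel="canonical" href="{target}">'


print(canonical_tag("https://www.example.com/shoes/?size=11&colour=black"))
print(canonical_tag("https://www.example.com/shoes/?colour=red"))
```

Sorting the parameters means that `?size=11&colour=black` and `?colour=black&size=11` both declare the same canonical URL.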

Bear in mind that crawl budget may still be wasted, as search engines have to crawl a facet URL to see its canonical tag. Canonical tags are also hints rather than directives, so search engines can ignore them if they judge them to be implemented incorrectly. Many sites combine canonical tags with another solution to protect against SEO performance risks.

Use clean and clear facets

It can be tempting to enable lots of filters when introducing faceted navigation, so users can refine their search to the most specific option. However, you don’t want to overwhelm or confuse potential customers and search engines. Stick with a clear structure that has:

- User-friendly labels that are instantly understandable and match customer needs. Avoid technical terms or jargon that can be confusing. For example, stick with common colour options if filtering t-shirts by shade.

- Simple facets that narrow a search quickly. Don’t add 30 different filter options, as this can overwhelm users and generate thousands of unnecessary facet URLs.

- Clean URLs that are readable, where the platform allows this. Try to avoid messy and long query strings, if possible, as these can be off-putting for users and more complex for search engines.
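As an illustration of clean, readable facet URLs, the Python sketch below builds path-based URLs with a fixed facet order, so each combination maps to exactly one URL. The facet names, the `facet-value` segment format, and the helper names are all hypothetical, and it assumes one value per facet.

```python
import re

# Hypothetical fixed facet order: every combination produces exactly one path,
# so /colour-black/size-11/ and /size-11/colour-black/ can't both exist.
FACET_ORDER = ["brand", "colour", "size"]


def slugify(value: str) -> str:
    """Lowercase a label and replace runs of other characters with single hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", value.lower()).strip("-")


def clean_facet_path(category: str, selections: dict[str, str]) -> str:
    """Build a readable path such as /shoes/colour-black/size-11/."""
    unknown = set(selections) - set(FACET_ORDER)
    if unknown:
        # Refuse facets outside the agreed set rather than minting new URL patterns.
        raise ValueError(f"unsupported facets: {sorted(unknown)}")
    segments = [slugify(category)]
    for facet in FACET_ORDER:
        if facet in selections:
            segments.append(f"{facet}-{slugify(selections[facet])}")
    return "/" + "/".join(segments) + "/"


print(clean_facet_path("Shoes", {"size": "11", "colour": "Black"}))
# /shoes/colour-black/size-11/
```

Rejecting unknown facets is one way to keep the number of URL patterns small, in line with keeping facets simple.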

Optimise your robots.txt

If you need to block URLs due to faceted navigation, implementing a disallow for specific sections of the site can provide a fast, customisable solution. This helps when you face crawl budget issues, as you don’t need all the faceted URLs to be crawled.

The risk is that robots.txt is a directive rather than an enforcement mechanism, so search engine spiders could ignore it, although major search engines rarely do.

To optimise your robots.txt, add a custom parameter to the end of every URL for a filter combination or facet you need to block. Then disallow all URLs that contain this parameter in your robots.txt file.
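The Python sketch below shows one way this could look. The parameter name `crawl=no` is hypothetical, and the matcher is a simplified model of Google-style robots.txt matching (`*` matches any characters, a trailing `$` anchors the end of the URL). It ignores user-agent groups, Allow rules, and longest-match precedence, so use it only as a quick sanity check.

```python
import re

# Hypothetical robots.txt: "crawl=no" is an illustrative parameter that the
# site appends to every facet URL it wants to keep away from crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /*?crawl=no
Disallow: /*&crawl=no
"""


def disallow_rules(robots_txt: str) -> list[str]:
    """Collect non-empty Disallow values (an empty Disallow blocks nothing)."""
    rules = []
    for line in robots_txt.splitlines():
        field, _, value = line.partition(":")
        if field.strip().lower() == "disallow" and value.strip():
            rules.append(value.strip())
    return rules


def rule_to_regex(rule: str) -> re.Pattern:
    """Translate a path rule: '*' matches any characters, a trailing '$' anchors the end."""
    anchored = rule.endswith("$")
    body = rule[:-1] if anchored else rule
    pattern = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile(pattern + ("$" if anchored else ""))


def is_disallowed(path_and_query: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """True if any Disallow rule matches from the start of the path and query string."""
    return any(rule_to_regex(rule).match(path_and_query) for rule in disallow_rules(robots_txt))


print(is_disallowed("/shoes/?colour=black&size=11&crawl=no"))  # True
print(is_disallowed("/shoes/?colour=black&size=11"))           # False
```

Using two rules (`?crawl=no` and `&crawl=no`) catches the parameter whether it is the only one or follows other facets, without also matching a different parameter such as `recrawl=no`.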

If you want Google to pick up changes to your robots.txt file faster, use the robots.txt report in Google Search Console, which replaced the older robots.txt Tester, to request a recrawl.

Apply noindex tags

A URL that’s disallowed in robots.txt can still be indexed, without its content, if other pages link to it. To keep facet pages out of the index, use a “noindex” robots meta tag in the page’s <head>.

A noindex tag alone doesn’t stop your pages from being crawled, so crawl budget is still spent on them. However, don’t apply noindex and a robots.txt disallow to the same URLs at the same time: if a page can’t be crawled, search engines never see its noindex tag. Instead, leave the pages crawlable until they drop out of the index, then add the disallow to stop further crawling.

This approach is useful when faceted navigation has gone wrong and you need to clean duplicate parameter URLs out of search engine indexes.
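A minimal Python sketch of serving the noindex tag is shown below. The policy is hypothetical: any query parameter not explicitly listed as indexable marks the URL as a facet page. Unfiltered pages get no tag, since indexing is the default. Search engines only see this tag on pages they are allowed to crawl.

```python
from urllib.parse import parse_qsl, urlsplit


def robots_meta_tag(url: str, indexable_params: frozenset[str] = frozenset()) -> str:
    """Return the robots meta tag for a listing page's <head>, or "" if none is needed.

    Hypothetical policy: a URL carrying any query parameter outside
    `indexable_params` is a facet page to keep out of the index.
    """
    params = {key for key, _ in parse_qsl(urlsplit(url).query)}
    if params - indexable_params:
        return '<meta name="robots" content="noindex">'
    return ""


print(robots_meta_tag("https://www.example.com/shoes/?colour=black"))
# <meta name="robots" content="noindex">
```

Passing `indexable_params=frozenset({"page"})`, for example, would let paginated category pages stay indexable while facet URLs are excluded.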

Use Google Search Console

The Page indexing report (formerly the Coverage report) in Google Search Console shows indexed versus non-indexed pages. This helps you quickly identify excessive indexing of faceted or filtered URLs, as well as accidental blocking of important pages.

Look for any spikes in indexed pages, especially after implementing faceted navigation, as these might be a sign of uncontrolled URL proliferation. Check for any parameter combinations that you don’t want to be crawlable, as these could highlight a faceted navigation problem.
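To spot unwanted parameter combinations in a list of indexed URLs, such as example URLs exported from Search Console, you could group them by the parameters they carry. The Python sketch below does that; the URL list is hypothetical, and export sizes are limited, so treat the counts as a sample rather than a full census.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def parameter_patterns(urls: list[str]) -> Counter:
    """Count URLs by the sorted set of query parameter names they carry."""
    counts: Counter = Counter()
    for url in urls:
        keys = sorted({key for key, _ in parse_qsl(urlsplit(url).query)})
        counts["&".join(keys) or "(no parameters)"] += 1
    return counts


indexed = [  # hypothetical rows from an indexed-pages export
    "https://www.example.com/shoes/",
    "https://www.example.com/shoes/?colour=black",
    "https://www.example.com/shoes/?colour=black&size=11",
    "https://www.example.com/shoes/?size=11&colour=red",
    "https://www.example.com/shoes/?colour=blue&size=9&sort=price",
]

for pattern, n in parameter_patterns(indexed).most_common():
    print(f"{n:>5}  {pattern}")
```

A pattern you never intended to be indexed, such as one including `sort`, showing up in the counts is the kind of signal worth investigating.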

This only shows indexing information for Google, not for other search engines such as Bing, Yahoo, or DuckDuckGo.

Apply nofollow within internal links

If you need to curtail crawl wastage, you can also add rel="nofollow" to internal links that point to faceted URLs you don’t want bots to crawl.
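A minimal Python sketch of rendering filter links this way is below. `facet_link` is a hypothetical helper, and the `crawlable` flag is assumed to come from your own list of facets worth crawling.

```python
from html import escape


def facet_link(href: str, label: str, crawlable: bool) -> str:
    """Render a filter link, adding rel="nofollow" when the facet shouldn't be crawled."""
    rel = "" if crawlable else ' rel="nofollow"'
    # escape() turns "&" in query strings into "&amp;", as HTML attributes require.
    return f'<a href="{escape(href)}"{rel}>{escape(label)}</a>'


print(facet_link("/shoes/?colour=red", "Red", crawlable=False))
# <a href="/shoes/?colour=red" rel="nofollow">Red</a>
```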

However, nofollow is a hint rather than a directive, so Google may not always abide by it. It also won’t resolve the issue entirely, as faceted URLs can still be discovered and indexed through other links, and link equity will remain affected.

Use AJAX when working on a new website

With AJAX, applying filters updates the results on the page without creating new URLs. The browser fetches the filtered results in the background rather than loading a new page.

When using this option, you’ll need to ensure that search engines still have a crawl path to every page that needs to be indexed.

The catch is that AJAX filtering is realistically only practical to build into a new site. So, unless you’re building a new site or undergoing redevelopment, this probably won’t be a solution that applies to you.

Faceted search examples

Many popular digital commerce platforms offer faceted search capabilities to businesses that build their ecommerce and enterprise sites on them. The way these appear and operate can vary depending on the chosen platform.

Common faceted search examples include the ability to narrow a clothing item by attributes such as its colour, size, and brand, as well as refining a hotel search for a holiday by its location, rating, and amenities (like a swimming pool or breakfast included).

These are some faceted navigation examples used by brands across various digital commerce platforms.

Adobe Commerce

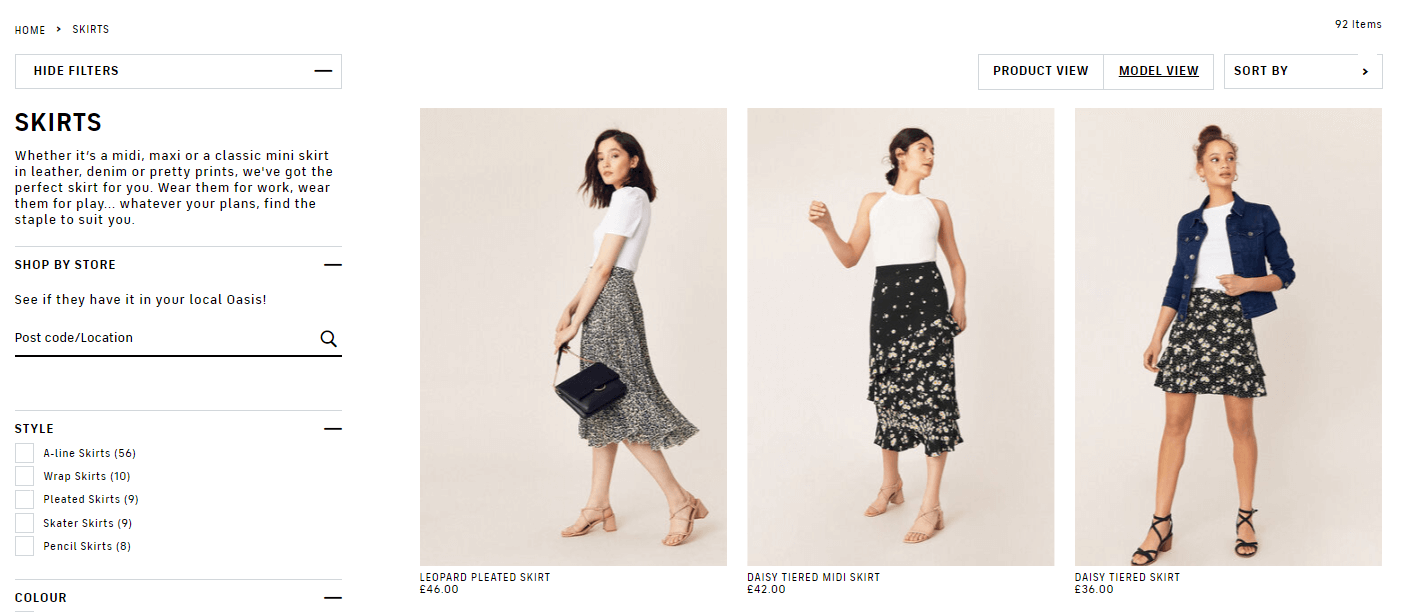

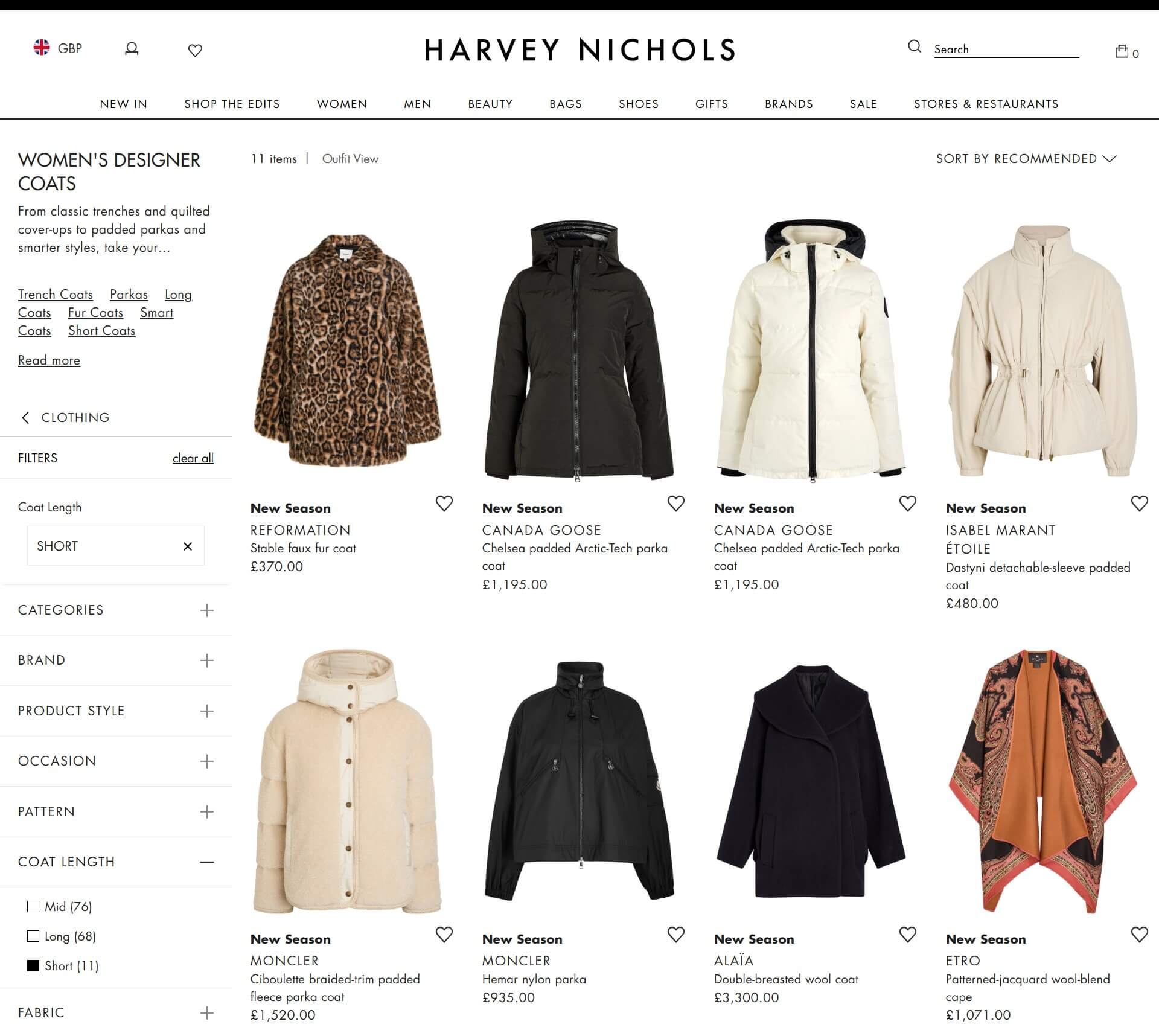

The luxury department store Harvey Nichols uses Adobe Commerce to power its online shopping experience. Its site has an extensive navigation bar with a drop-down menu for the many categories within its designer clothing and beauty range.

Using faceted navigation across the Harvey Nichols site is simple. Browse category pages and then select from the filter options on the left sidebar. Some may lead to a new subcategory page, but others create a faceted search URL.

For example, on the women’s coats category page, filters lead to subcategory pages for items such as parkas and trench coats. Using the faceted search options though, you can filter the type of women’s coats by attributes including the pattern, coat length, size, colour, fabric, and more. These create faceted URLs.

Salesforce Commerce Cloud

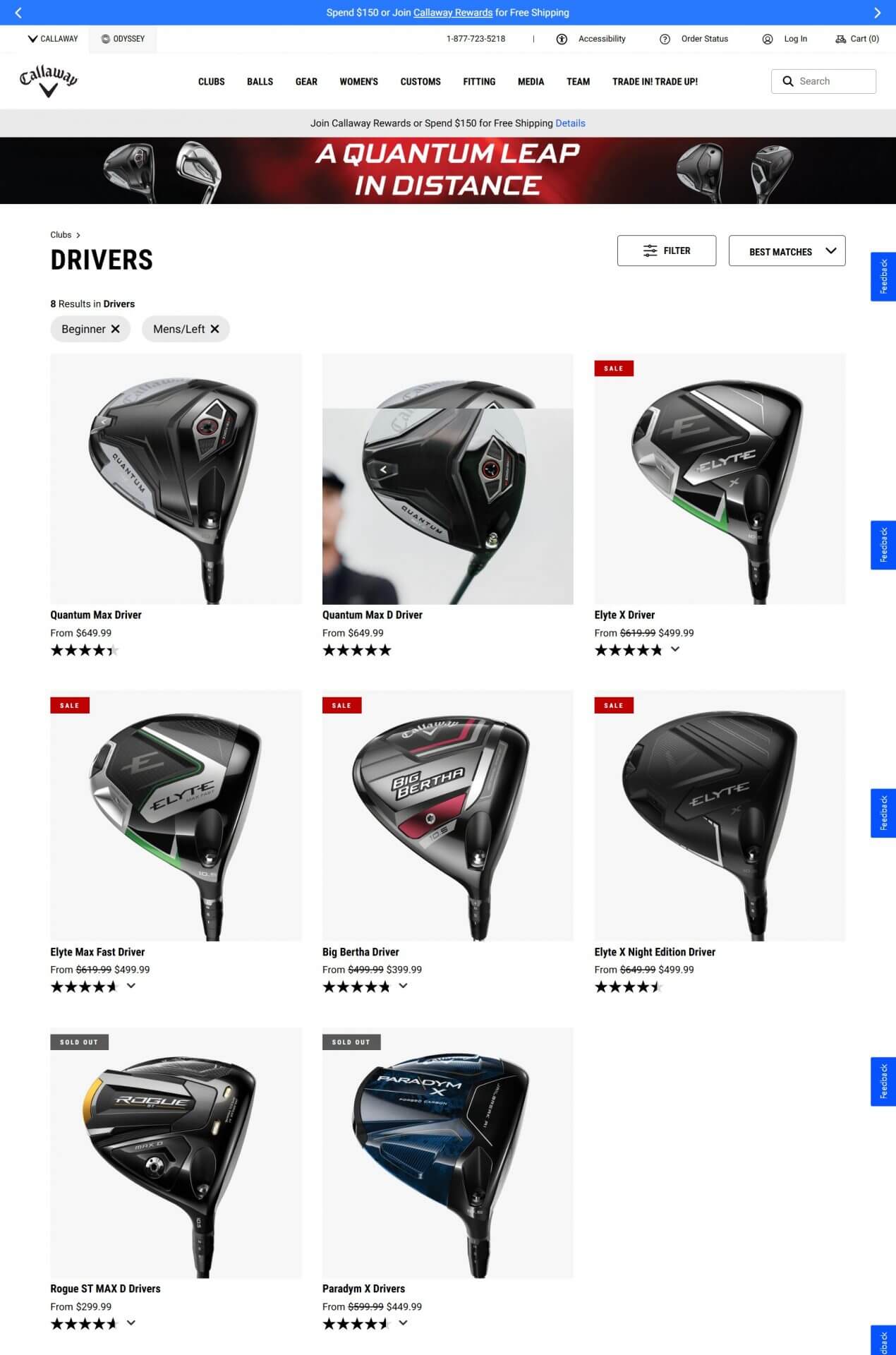

Callaway Golf’s website is built on Salesforce Commerce Cloud. The brand sells a wide range of golfing equipment online, segmented into categories in its navigation bar that break down into subcategories and product listings.

Many category pages and product listings include a filter option in the top-right corner that enables faceted navigation. The filters depend on the product type but can include attributes such as price, gender/hand, skill level, size, and club type.

Users can combine more than one filter, even within the same attribute. For example, on the golf shoes category page, you can filter by sizes 10 medium, 11 wide, and 11.5 medium at the same time. Combined with other attributes, this can create thousands of faceted URLs.

Shopify

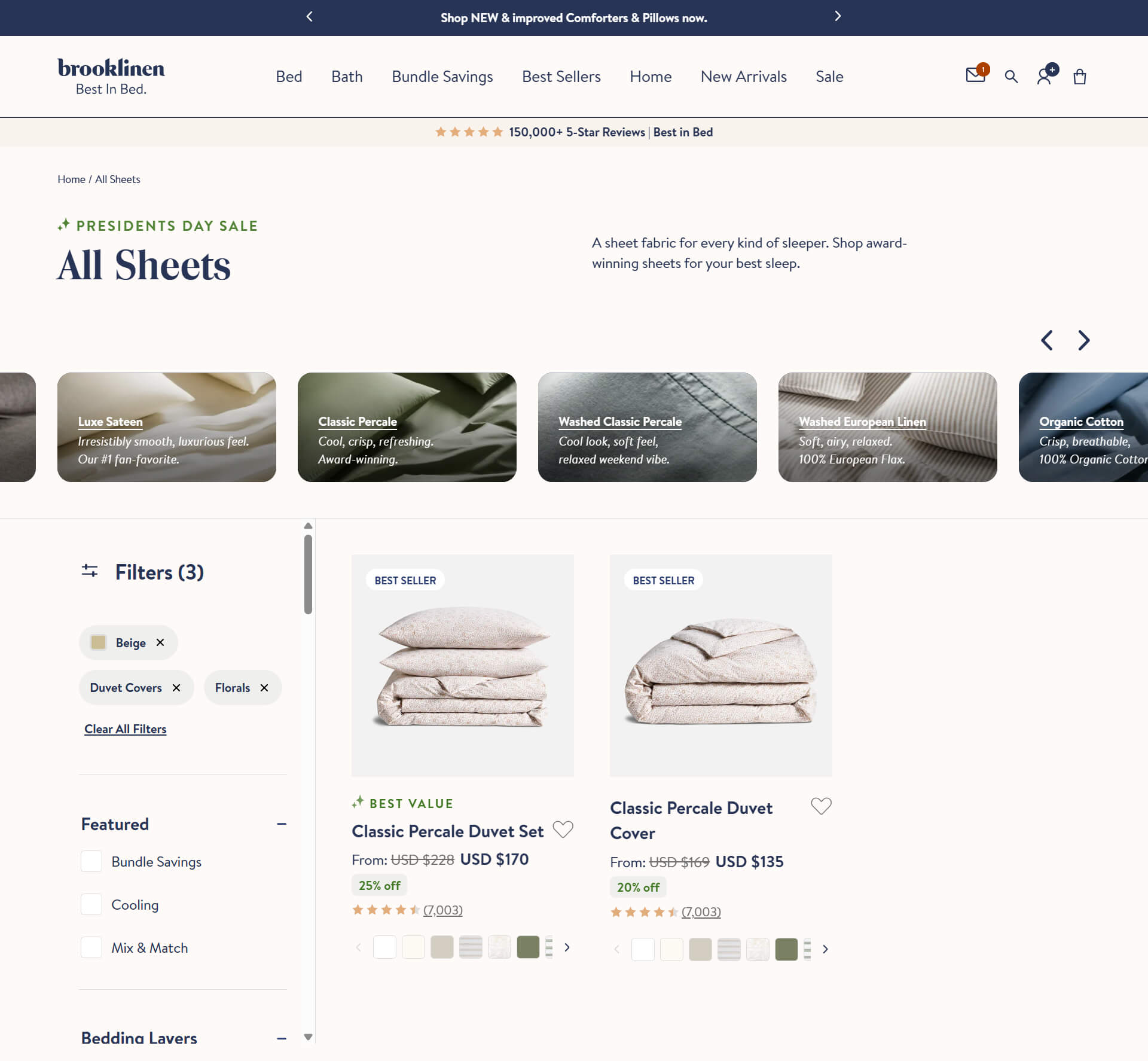

Brooklinen is a bedding and home goods Shopify store. It sells a wide range of bedding, sheets, bath goods, and other items. These are separated into common collections such as pillowcases, duvet covers, and throws.

There are thousands of products across the site, so Brooklinen uses Shopify faceted navigation to help shoppers quickly and easily refine their search. Collection pages have a filter option down the left-hand side that includes attributes such as colour, size, pattern, fabric, and product type. Exact filters vary depending on the category or product listing.

This product-attribute faceting lets shoppers apply multiple filters at once. Without faceted navigation, Brooklinen would need a separate static URL for every specific filtered option, running into tens of thousands of URLs and creating a large and complex site.

The AI impact on faceted search

The use of AI is changing faceted search and removing some of the manual actions for users when shopping online for products and services. While the traditional approach of searching for a product, visiting a category page, and filtering by attributes is still widely used, AI search enables all of this in one place.

Many large language models (LLMs) and AI tools do the legwork of filtering and refining searches for products themselves. They can identify and apply filters to surface specific products directly with no need for the user to navigate through them on an ecommerce site.

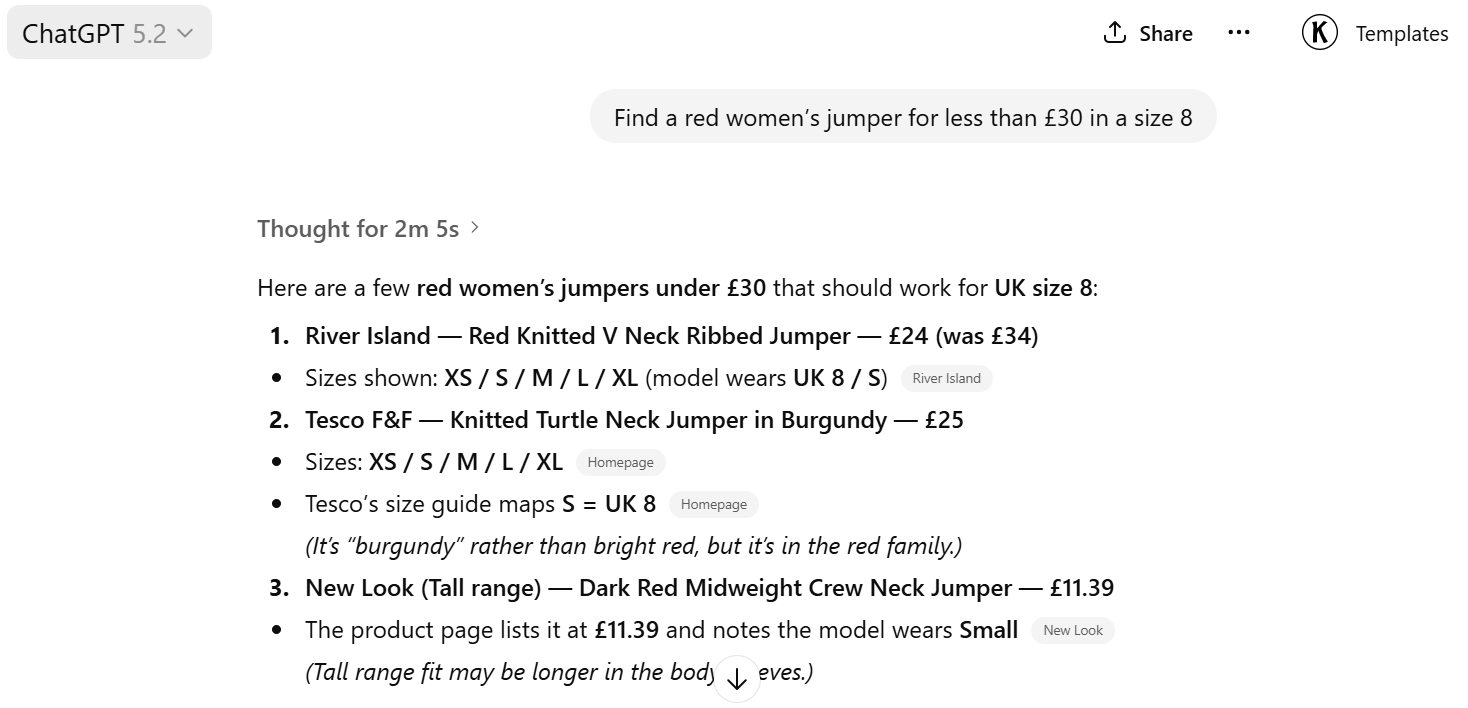

For example, users can ask an LLM directly to find ‘a red women’s jumper for less than £30 in a size 8’. This applies several facets in one go, and as shopping through AI tools evolves, the LLM can present options from across different ecommerce brands.

But rather than reducing its importance, the growth of AI further drives home the need for faceted navigation. AI tools can crawl faceted URLs and filter options across ecommerce sites on behalf of users, so implementing faceted navigation correctly remains important. AI is moving faceted navigation from manual filtering towards self-optimising discovery paths.

Implement SEO-friendly faceted search on your site

Resolve indexing issues, page equity dilution, and crawl waste when implementing faceted navigation on your site to maintain and improve your SEO performance. As with any element of technical SEO, you must take great care choosing the options best for your site.

At SALT, we can help ecommerce and enterprise websites of any size and industry ensure that any faceted search options operate in an SEO-friendly manner. This includes expert analysis, recommendations, and actions to maintain and enhance your site’s performance.

Get in touch to discuss your project and find out how we can help.